Welcome

This book exists because the main README was getting unwieldy, and nobody wants to scroll through a novel to find where they left off.

The whole point here is simple: learn AI without becoming dependent on AI to do the learning for you. The difference is that one path leaves you able to create something original someday, and the other leaves you starving when the delivery apps go down.

What You'll Find Here

The wiki is organized into learning modules that build upon each other, because that's how knowledge works, despite what the internet may lead you to believe. Each section includes the theory you need to understand, the math you can't skip, and projects that will make you question your life choices before making you slightly more competent.

There are study notes from me, wisdom borrowed from anonymous Reddit philosophers who've graciously shared their hard-won insights (most hide behind usernames like "DeepLearningDegenerate42," hoping their future employers never connect the dots), project documentation that chronicles every spectacular failure and face-palm moment, and resources. Books get dissected, YouTube videos face the guillotine of honest critique, and articles are put through the wringer of actual usefulness rather than just dumped into yet another "47 Essential AI Channels" listicle that reads like everyone copy-pasted from the same source.

The goal here isn't just technical competence, though that's important unless you enjoy being professionally irrelevant. This is about becoming a more complete human being who can navigate both code and conversations, debug algorithms and personal relationships, optimize neural networks and life decisions. Consider me your slightly unhinged life coach who happens to believe that learning to think clearly about artificial intelligence somehow makes you better at thinking clearly about everything else.

Introduction: Learning AI the Hard Way

Here's the thing about learning AI: everyone wants to skip to the fun part. They want to prompt ChatGPT to write their neural networks while they sit back and pretend they understand what's happening under the hood. That's not learning, that's just expensive copy-paste.

This roadmap takes a different approach. We're going back to fundamentals, working through problems by hand, and building understanding from the ground up.

Why the Hard Way Works

When you struggle through a calculus derivation with pencil and paper, something clicks that never happens when you watch someone else do it. When you implement gradient descent from scratch instead of calling a library function, you understand why learning rates matter and what those loss curves actually mean.

The AI field moves fast, frameworks change, and new architectures appear monthly. But the mathematical foundations remain the same. Linear algebra doesn't suddenly become obsolete because a new transformer variant drops. Understanding these fundamentals gives you the flexibility to adapt and the insight to see through the hype.

The Rules

No AI assistants during learning. Your calculator is your most advanced tool, and even that should be used sparingly. When you're implementing backpropagation, do it step by step. When you're debugging, use print statements and think through the logic. When you're stuck on a concept, work through examples until it makes sense.

This isn't about making things unnecessarily difficult. It's about building genuine understanding that will serve you when the easy tools fail or when you need to go beyond what existing libraries can do.

What You'll Gain

By the end of this journey, you'll be able to read research papers and understand what's actually novel. You'll debug machine learning systems by reasoning about the underlying math. You'll propose new architectures because you understand how existing ones work. Most importantly, you'll be able to teach others, which is the ultimate test of understanding.

The goal isn't to reject modern tools forever. It's to earn the right to use them effectively by first understanding what they do and why they work. Once you've built a neural network from scratch, using PyTorch becomes a conscious choice rather than cargo cult programming.

Getting Started

This roadmap is organized as a dependency graph. Some topics build directly on others, while some can be learned in parallel. The priority system helps you focus on what's essential versus what's nice to have.

Start with the mathematical foundations even if they seem boring. They're the language everything else is written in. Move through programming fundamentals to build your implementation skills. Then gradually work your way up to the cutting-edge stuff.

Take your time. Understanding is more valuable than speed. Work through the exercises, implement the algorithms, and don't move on until things make sense. Your future self will thank you when you're debugging a production ML system at 2 AM and you actually understand what's going wrong.

The journey is long, but that's what makes it worthwhile. Anyone can use pre-trained models. Not everyone can understand how they work or improve them. Choose which person you want to be.

Universal Learning Priority Legend

Not all knowledge is created equal. Some concepts are foundational pillars that everything else builds on, while others are nice-to-have extras that can wait until later. This priority system helps you focus your energy where it matters most.

Priority Levels

Must Learn - These are the non-negotiables. Skip these and you'll hit walls everywhere. Linear algebra, basic calculus, Python fundamentals, and core machine learning concepts all fall here.

Recommended - These topics will make your life significantly easier and your understanding much deeper. They're not absolutely essential to get started, but they fill in important gaps and connect concepts together. Things like advanced SQL, proper Git workflow, and statistical inference live in this category.

Optional - The cherry on top. These are specialized knowledge areas, advanced techniques, or tools that serve specific purposes. They're genuinely useful, but only after you've mastered the fundamentals. Quantum machine learning, advanced DevOps, and cutting-edge research topics fit here.

How to Use This System

Start with the Must Learn topics in any area before moving on. If you're working on machine learning, nail down your linear algebra and basic statistics before diving into neural networks. If you're setting up your development environment, get comfortable with the command line and basic Git before exploring advanced containerization.

The Recommended items can often be learned in parallel with Must Learn topics or shortly after. They reinforce and extend your core knowledge. Don't feel like you need to complete everything at one level before touching the next, but don't skip the foundations either.

Optional topics are for when you have specific needs or interests. Maybe you're working on a project that requires time series analysis, or you're curious about the theoretical underpinnings of optimization. These can be motivating to explore, but don't let them distract you from building solid fundamentals.

Context Matters

Your specific goals might shift these priorities. If you're joining a team that uses Kubernetes heavily, that Optional DevOps knowledge might become Must Learn for you. If you're doing computer vision research, advanced mathematical topics move up in priority.

The key is being honest about where you are and what you actually need. It's tempting to jump to the exciting advanced stuff, but the fundamentals are what separate people who can use AI tools from people who can build and improve them.

Remember, this is a marathon, not a sprint. Focus on understanding over coverage, and don't worry about keeping up with every new development in the field. The fundamentals will serve you for decades, while the latest framework might be obsolete next year.

The Math

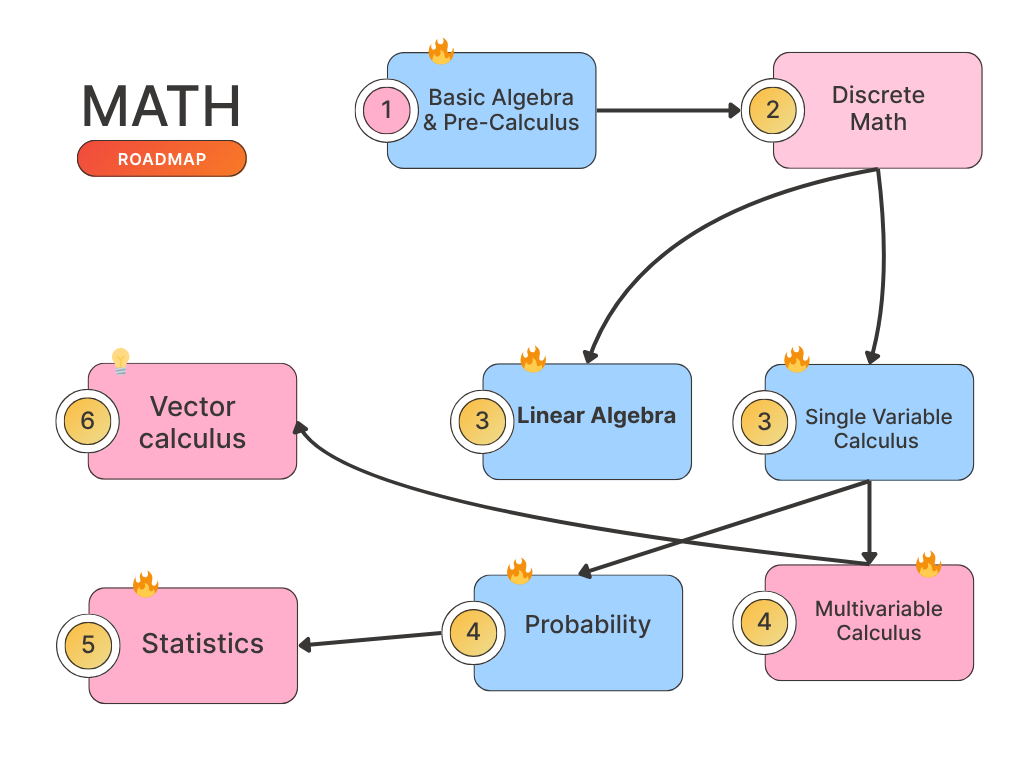

Here's how to learn the math. Topics that share the same number don't need to be studied in order; work through them in parallel, no stress. Flow with it.

Basic Algebra & Pre-Calculus

This is where your mathematical foundation begins. If you're thinking "I already know algebra," great, but take a moment to make sure you really understand these concepts at a level that will support everything that comes after.

Resources

I recommend watching only Professor Leonard's lectures and working through every problem in the Calculus I with integrated Precalculus book.

| Resource | Type | Cost | Link |
|---|---|---|---|
| Khan Academy Algebra | Interactive Course | Free | khanacademy.org |
| Paul's Online Math Notes | Reference/Tutorial | Free | tutorial.math.lamar.edu |
| Professor Leonard | Video Lectures | Free | YouTube |
| OpenStax Algebra & Trigonometry | Textbook | Free | openstax.org |
| PatrickJMT | Video Tutorials | Free | YouTube |
| Wolfram Alpha | Problem Solver | Free/Paid | wolframalpha.com |
| Calculus I with integrated Precalculus | Book | Free/Paid | READ CHAPTER 0 |

Why This Matters for AI

Machine learning is fundamentally about finding patterns in data using mathematical relationships. Every neural network weight update, every optimization step, every statistical model relies on algebraic manipulation. When you see a research paper with equations, this is the language they're written in.

More practically, you'll be constantly working with variables, solving for unknowns, and manipulating expressions. When your gradient descent isn't converging, you need to understand what's happening algebraically to debug it.

Core Algebraic Concepts

Start with equation solving. You should be comfortable isolating variables, dealing with multiple variables, and understanding what it means for equations to have no solution, one solution, or infinitely many solutions. This translates directly to understanding when machine learning problems are well-posed.
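To make the three cases concrete, here is a minimal sketch that classifies a system of two linear equations in two unknowns. The function name `classify_2x2` and the coefficient layout are illustrative choices, not something from a library, and the sketch assumes exact (integer) coefficients with at least one nonzero coefficient in each equation.

```python
def classify_2x2(a1, b1, c1, a2, b2, c2):
    """Classify the system  a1*x + b1*y = c1,  a2*x + b2*y = c2.

    Assumes each equation has at least one nonzero coefficient and that
    the inputs are exact (ints); floats would need a tolerance instead of ==.
    """
    det = a1 * b2 - a2 * b1
    if det != 0:
        # The two lines cross exactly once; Cramer's rule gives the point.
        x = (c1 * b2 - c2 * b1) / det
        y = (a1 * c2 - a2 * c1) / det
        return "one solution", (x, y)
    # det == 0: the lines are parallel or identical.
    # They are identical when the right-hand sides keep the same proportion.
    if a1 * c2 - a2 * c1 == 0 and b1 * c2 - b2 * c1 == 0:
        return "infinitely many solutions", None
    return "no solution", None


print(classify_2x2(1, 1, 3, 1, -1, 1))   # x + y = 3,  x - y = 1
print(classify_2x2(1, 1, 2, 2, 2, 4))    # second equation is twice the first
print(classify_2x2(1, 1, 2, 1, 1, 3))    # parallel lines
```

Working the same three systems by hand first, then checking against the function, is in the spirit of the rules above.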

Polynomial manipulation comes up everywhere. Factoring, expanding, and working with quadratic equations aren't just academic exercises. Polynomial regression fits polynomials directly, L2 regularization adds a quadratic term in the weights, and loss surfaces are routinely approximated near a minimum by quadratics.

Functions and Their Behavior

Understanding functions deeply is crucial. You need to know what domain and range mean, how to compose functions, and how functions transform inputs to outputs. Machine learning models are just complex functions that map inputs to predictions.
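As a small illustration of composition, the sketch below chains a linear function and a ReLU into a one-input "model". The names `linear`, `relu`, and `compose`, and the default values of `w` and `b`, are made up for this example.

```python
def linear(x, w=2.0, b=-1.0):
    """Inner function: domain is all real numbers, range is all real numbers."""
    return w * x + b


def relu(u):
    """Outer function: domain is all real numbers, range is [0, infinity)."""
    return max(0.0, u)


def compose(outer, inner):
    """Return the function x -> outer(inner(x))."""
    return lambda x: outer(inner(x))


model = compose(relu, linear)
outputs = [model(x) for x in (-1.0, 0.5, 2.0)]
print(outputs)
```

The composed function inherits the domain of `linear` and the range of `relu`, so it can never output a negative number no matter what input it receives.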

Exponential and logarithmic functions show up constantly in AI. The sigmoid activation function is based on exponentials. Cross-entropy loss uses logarithms. Information theory, which underlies much of modern AI, is built on logarithmic relationships.
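A minimal sketch of both ideas, using only the standard library: the sigmoid built from an exponential, and the binary cross-entropy loss built from logarithms. The function names are illustrative, and the plain formula used here is only safe for moderate values of `z` (very large negative inputs would overflow `math.exp`).

```python
import math


def sigmoid(z):
    """Squash a real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def binary_cross_entropy(y_true, p):
    """Loss for one example: y_true is 0 or 1, p is the predicted P(class 1)."""
    return -(y_true * math.log(p) + (1 - y_true) * math.log(1 - p))


p = sigmoid(0.0)                              # an input of 0 maps to probability 0.5
confident_right = binary_cross_entropy(1, 0.9)
confident_wrong = binary_cross_entropy(1, 0.1)
print(p, confident_right, confident_wrong)
```

The logarithm is what makes a confident wrong answer so much more expensive than a confident right one.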

Trigonometric functions matter more than you might expect. They're not just for calculating triangles. Fourier transforms use sine and cosine functions to analyze signals and images. Many periodic patterns in data can be understood through trigonometric functions.
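To see sines and cosines doing signal analysis, here is a from-scratch sketch of a discrete Fourier transform that recovers the frequency of a sampled sine wave. It is the naive O(n²) version written for clarity, not the fast algorithm a library would use, and the signal (5 cycles over 64 samples) is an invented test case.

```python
import cmath
import math


def dft_magnitudes(samples):
    """Naive discrete Fourier transform; returns |X[k]| for every frequency bin k."""
    n = len(samples)
    magnitudes = []
    for k in range(n):
        total = 0j
        for t, x in enumerate(samples):
            # e^{-i*theta} = cos(theta) - i*sin(theta): sine and cosine in one term.
            total += x * cmath.exp(-2j * math.pi * k * t / n)
        magnitudes.append(abs(total))
    return magnitudes


n = 64
signal = [math.sin(2 * math.pi * 5 * t / n) for t in range(n)]
mags = dft_magnitudes(signal)

# For real-valued input the second half of the spectrum mirrors the first,
# so only the first half is searched for the strongest frequency.
dominant = max(range(n // 2), key=lambda k: mags[k])
print(dominant)
```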

Graphical Understanding

Being able to sketch and interpret graphs is essential. You need to understand how changing parameters affects function shape, where functions increase or decrease, and how to identify key features like maxima and minima. This intuition will serve you well when interpreting loss curves and understanding optimization landscapes.

Learn to read information from graphs quickly. Can you tell when a function is increasing or decreasing? Can you identify where it might have derivatives of zero? Can you understand the relationship between multiple functions plotted together?

Common Pitfalls

Don't rush through this thinking it's too basic. Many people who struggle with calculus and linear algebra actually have gaps in their algebraic foundations. Make sure you can manipulate expressions confidently before moving on.

Pay attention to the logical structure of mathematical arguments. Understanding why steps are valid, not just how to execute them, builds the reasoning skills you'll need for more advanced topics.

Practice working without a calculator when possible. You need to develop number sense and be comfortable with approximations.

Moving Forward

Once you're solid on these foundations, you'll be ready for the mathematical tools that directly power machine learning. But don't skip this step. The time you invest here will pay dividends throughout your entire AI journey.

If you find yourself struggling with later mathematical concepts, often the issue traces back to algebraic manipulation or function understanding. Having these skills locked down gives you the confidence to tackle more complex mathematical machinery.

Logic & Proofs

Boolean Algebra

Propositional Logic

Sets & Functions

Relations

Equivalence Relations

Graph Theory

Trees & Networks

Algorithms on Graphs

Combinatorics

Counting Principles

Permutations & Combinations

Single Variable Calculus

Calculus is the math of change, and machine learning is all about optimization: finding the best way to change model parameters. You can't understand gradient descent, backpropagation, or most ML algorithms without solid calculus fundamentals.

Resources

| Resource | Type | Cost | Link | Notes |
|---|---|---|---|---|
| 3Blue1Brown Calculus | Video Series | Free | YouTube | Best intuitive introduction available |
| Khan Academy Calculus | Interactive Course | Free | khanacademy.org | Solid practice problems and explanations |
| MIT OCW 18.01 | Full Course | Free | ocw.mit.edu | Rigorous treatment with problem sets |
| Paul's Online Math Notes | Reference | Free | tutorial.math.lamar.edu | Great for quick lookups and examples |
| Professor Leonard | Video Lectures | Free | YouTube | |
| Calculus I with integrated Precalculus | Book | Paid | Book link | |

What You Need to Know

The core concepts are limits, derivatives, and integrals. Limits help you understand what happens at boundary cases. Derivatives tell you how fast things change. Integrals let you accumulate change over time or space.

For ML specifically, you need to understand what a derivative represents geometrically and how to compute derivatives of common functions. The chain rule is absolutely critical, since neural networks are compositions of functions.
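As a worked example of the chain rule on a composition, take a single neuron with a sigmoid activation and a squared-error loss. The symbols $w$, $b$, $x$, $y$, $z$, and $a$ are chosen here for illustration; the setup is an assumed toy model, not one defined elsewhere in this roadmap.

```latex
\begin{aligned}
z &= wx + b, \qquad a = \sigma(z) = \frac{1}{1 + e^{-z}}, \qquad L = (a - y)^2 \\[4pt]
\frac{\partial L}{\partial w}
  &= \frac{\partial L}{\partial a}\cdot\frac{\partial a}{\partial z}\cdot\frac{\partial z}{\partial w} \\[4pt]
  &= 2(a - y)\cdot \sigma(z)\bigl(1 - \sigma(z)\bigr)\cdot x
\end{aligned}
```

Each factor is the derivative of one layer of the composition with respect to its own input, which is the same pattern repeated at larger scale in a full network.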

Integration becomes essential for probability theory and more advanced topics.

The Big Picture

A derivative is the slope of a curve at a given point. When training neural networks, you're constantly asking "which direction should I adjust this parameter to reduce the error?" That's exactly what gradients tell you: the gradient points in the direction of steepest increase, so you step the opposite way.

Don't get bogged down in integration techniques initially. Focus on understanding what derivatives mean and how to compute them confidently.

Derivatives

Derivatives are the foundation of machine learning optimization. Every time a neural network learns, it's using derivatives to figure out how to adjust its parameters.

Resources

Same as the previous page.

I recommend watching only Professor Leonard's lectures and working through every problem in the Calculus I with integrated Precalculus book.

| Resource | Type | Cost | Link | Notes |
|---|---|---|---|---|
| 3Blue1Brown Calculus | Video Series | Free | YouTube | Best intuitive introduction available |
| Khan Academy Calculus | Interactive Course | Free | khanacademy.org | Solid practice problems and explanations |
| MIT OCW 18.01 | Full Course | Free | ocw.mit.edu | Rigorous treatment with problem sets |
| Paul's Online Math Notes | Reference | Free | tutorial.math.lamar.edu | Great for quick lookups and examples |
| Professor Leonard | Video Lectures | Free | YouTube | |
| Calculus I with integrated Precalculus | Book | Paid | Book link | |

The Core Concept

A derivative measures how much a function changes when you change its input by a tiny amount. If f(x) is your function, f'(x) tells you the slope of the curve at point x.

In ML terms: if your loss function is L(w) where w is a weight, then L'(w) tells you how much the loss increases or decreases when you change that weight slightly.
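The "tiny change" idea can be tried directly. Below is a sketch that estimates L'(w) for a made-up one-weight model by nudging the weight a little in each direction; the data point (x = 2, y = 3), the step size `h`, and the function names are all assumptions for illustration.

```python
def loss(w, x=2.0, y=3.0):
    """Squared error of a one-weight model that predicts w * x."""
    return (w * x - y) ** 2


def derivative(fn, w, h=1e-5):
    """Central-difference estimate of fn'(w): rise over run across a tiny interval."""
    return (fn(w + h) - fn(w - h)) / (2 * h)


slope_at_1 = derivative(loss, 1.0)   # negative: increasing w lowers the loss
slope_at_2 = derivative(loss, 2.0)   # positive: increasing w raises the loss
print(slope_at_1, slope_at_2)
```

Differentiating by hand gives L'(w) = 2(wx - y)x, which is -4 at w = 1 and 4 at w = 2 for this data point; the numerical estimates should agree to several decimal places.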

Essential Rules

See the Calculus Cheat Sheet (derivatives section).
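For quick reference, these are the standard differentiation rules that any such cheat sheet covers. This list is a summary written for this page, not a copy of the linked sheet.

```latex
\begin{aligned}
\frac{d}{dx}\,c &= 0 && \text{(constant)} \\
\frac{d}{dx}\,x^n &= n\,x^{n-1} && \text{(power rule)} \\
\frac{d}{dx}\,\bigl[f(x) + g(x)\bigr] &= f'(x) + g'(x) && \text{(sum rule)} \\
\frac{d}{dx}\,\bigl[f(x)\,g(x)\bigr] &= f'(x)\,g(x) + f(x)\,g'(x) && \text{(product rule)} \\
\frac{d}{dx}\,\frac{f(x)}{g(x)} &= \frac{f'(x)\,g(x) - f(x)\,g'(x)}{g(x)^2} && \text{(quotient rule)} \\
\frac{d}{dx}\,f\bigl(g(x)\bigr) &= f'\bigl(g(x)\bigr)\,g'(x) && \text{(chain rule)} \\
\frac{d}{dx}\,e^{x} &= e^{x} \\
\frac{d}{dx}\,\ln x &= \frac{1}{x}
\end{aligned}
```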

Why This Matters

When you hear "gradient descent," that's just following derivatives downhill to minimize a function. When you see "backpropagation," that's computing derivatives using the chain rule through a network.
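Here is a minimal sketch of "following derivatives downhill" on a one-parameter loss whose minimum is known to be at w = 3. The loss, the starting point, and the two learning rates are invented for illustration; the second run shows what happens when the step size is too large.

```python
def loss(w):
    """A bowl-shaped loss with its minimum at w = 3."""
    return (w - 3.0) ** 2


def grad(w):
    """Derivative of the loss, worked out by hand with the power and chain rules."""
    return 2.0 * (w - 3.0)


def gradient_descent(w, learning_rate, steps):
    """Repeatedly step against the derivative."""
    for _ in range(steps):
        w = w - learning_rate * grad(w)
    return w


w_good = gradient_descent(0.0, learning_rate=0.1, steps=100)   # settles near 3
w_bad = gradient_descent(0.0, learning_rate=1.1, steps=100)    # overshoots and diverges
print(w_good, loss(w_good))
print(w_bad, loss(w_bad))
```

With a learning rate of 0.1 each step shrinks the distance to the minimum by a factor of 0.8; with 1.1 each step multiplies it by -1.2, so the iterate bounces across the bowl and moves further away every time.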

Understanding derivatives geometrically (as slopes) and algebraically (as limits) gives you the intuition to debug optimization problems and understand why learning algorithms work the way they do.

Integrals

Integrals accumulate change over intervals. While less immediately critical than derivatives for basic ML, they're essential for probability theory, statistics, and advanced AI topics.

Resources

As on the previous page, I highly recommend watching only Professor Leonard's lectures and working through every problem in the Calculus I with integrated Precalculus book.

| Resource | Type | Cost | Link | Notes |
|---|---|---|---|---|
| Professor Leonard | Video Lectures | Free | YouTube | |
| Calculus I with integrated Precalculus | Book | Paid | Book link | |
| 3Blue1Brown - Integration | Video | Free | YouTube | Excellent visual intuition |
| Khan Academy Integration | Interactive | Free | khanacademy.org | Comprehensive practice problems |
| Paul's Online Notes | Reference | Free | tutorial.math.lamar.edu | Clear explanations and techniques |
| Integral Calculator | Tool | Free | integral-calculator.com | Step-by-step solutions for checking work |

The Core Idea

An integral finds the area under a curve. If derivatives ask "how fast is this changing?", integrals ask "how much total change happened?"
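The area interpretation can be checked numerically. This sketch approximates the area under f(x) = x² between 0 and 1 by slicing it into thin trapezoids; the function name `trapezoid_area` and the choice of 1000 slices are illustrative.

```python
def trapezoid_area(f, a, b, n=1000):
    """Approximate the area under f between a and b using n trapezoids."""
    width = (b - a) / n
    # The two endpoints count half; every interior point is shared by two trapezoids.
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * width)
    return total * width


area = trapezoid_area(lambda x: x ** 2, 0.0, 1.0)
print(area)   # close to the exact value 1/3
```

Increasing `n` shrinks the gap to the exact answer, which is the limiting process that defines the integral.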

In probability, integrals let you work with continuous distributions. In machine learning, they show up in expectation calculations, normalization constants, and theoretical analysis.

Basic Techniques

Fundamental theorem: integration and differentiation are inverse operations. If F'(x) = f(x), then ∫f(x)dx = F(x) + C.
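A short worked example of the theorem, using $f(x) = x^2$ as an arbitrary choice:

```latex
F(x) = \frac{x^3}{3} \;\Longrightarrow\; F'(x) = x^2,
\qquad\text{so}\qquad
\int x^2\,dx = \frac{x^3}{3} + C
\qquad\text{and}\qquad
\int_0^1 x^2\,dx = F(1) - F(0) = \frac{1}{3}.
```

The constant $C$ cancels in the definite integral, which is why it only appears in the indefinite form.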

AI Applications

Probability density functions integrate to 1. Expected values are integrals of x times the probability density.
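Both statements can be verified numerically for a normal distribution. The sketch below uses a simple midpoint rule; the parameters (mean 2, standard deviation 1.5), the integration range of ten standard deviations either side, and the helper names are assumptions made for the example.

```python
import math


def normal_pdf(x, mu=0.0, sigma=1.0):
    """Density of a normal distribution with mean mu and standard deviation sigma."""
    z = (x - mu) / sigma
    return math.exp(-0.5 * z * z) / (sigma * math.sqrt(2 * math.pi))


def integrate(f, a, b, n=20000):
    """Midpoint-rule approximation of the integral of f over [a, b]."""
    width = (b - a) / n
    return sum(f(a + (i + 0.5) * width) for i in range(n)) * width


mu, sigma = 2.0, 1.5
lo, hi = mu - 10 * sigma, mu + 10 * sigma   # the tails beyond this are negligible

total_prob = integrate(lambda x: normal_pdf(x, mu, sigma), lo, hi)
mean = integrate(lambda x: x * normal_pdf(x, mu, sigma), lo, hi)
print(total_prob, mean)   # approximately 1 and approximately mu
```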

Gaussian integrals show up everywhere in statistics and Bayesian methods. Many loss functions are expected values, which means they are integrals over the data distribution.

You don't need to be an integration wizard initially, but understanding the concept and being able to handle basic integrals is crucial for the mathematical foundations of AI.

Multivariable Calculus

Partial Derivatives

Multiple Integrals

Vector Fields

Gradient & Divergence

Curl & Del Operator

Line & Surface Integrals

Green's & Stokes' Theorems

Matrices & Determinants

Matrix Operations

Gaussian Elimination

Vector Spaces

Linear Independence

Basis & Dimension

Eigenvalues & Eigenvectors

Diagonalization

Singular Value Decomposition

Inner Products & Orthogonality

Gram-Schmidt Process

Matrix Decompositions

LU/QR/Cholesky

Tensor Algebra

Probability Theory

Random Variables

Distributions

Bayes' Theorem

Central Limit Theorem

Markov Chains

Statistics

Descriptive Statistics

Hypothesis Testing

Confidence Intervals

Regression Analysis

ANOVA

Optimization Theory

Convex Optimization

Gradient Descent

Lagrange Multipliers

Constrained Optimization

Linear Programming

Real Analysis

Complex Analysis

Differential Equations

Fourier Analysis

Information Theory

Terminal/Command Line Basics

Git Version Control

SSH & Key Management

Shell Scripting

tmux & Terminal Multiplexing

VS Code Setup

Vim/Neovim

Emacs/Spacemacs

Package Managers

pip & virtualenv

conda & mamba

System Package Managers

Containerization

Docker

Docker Compose

Kubernetes

IDE/Editor Setup

Debugger

Linting & Code Formatting

Testing Frameworks

Jupyter Notebook/Lab

API Testing Tools

Database Tools

GitHub Actions

Jenkins & CI/CD

Advanced DevOps & Monitoring

Programming Logic & Thinking

Variables & Data Types

Control Flow

Functions

Python

JavaScript

Java

C/C++

Other Languages

Basic Data Structures

Arrays/Lists

Strings

Dictionaries/Maps

Intermediate Data Structures

Stacks & Queues

Sets

Linked Lists

Trees & Graphs

Basic Algorithms

Searching Algorithms

Sorting Algorithms

Algorithm Complexity

OOP Fundamentals

Classes & Objects

Methods & Attributes

Encapsulation

Advanced OOP Concepts

Inheritance

Polymorphism

Abstraction

File Operations

Error Handling

Debugging Techniques

Code Organization

External Libraries

Package Management

Database Concepts

ACID Properties

Database Design

Basic SQL

Advanced SQL

Database Functions

Query Optimization

PostgreSQL

MySQL

SQLite

Database Administration

Document Databases

MongoDB

CouchDB

Key-Value & Column Stores

Redis

Apache Cassandra

Graph Databases

Neo4j

Data Warehousing

Big Data Technologies

Apache Hadoop

Apache Spark

Apache Kafka

Cloud Data Platforms

AI-Specific Storage

Vector Databases

Time Series Databases

Feature Stores

Data Manipulation & Analysis

NumPy

Pandas

Data Cleaning

Data Visualization

Matplotlib

Seaborn

Plotly

Exploratory Data Analysis

ML Concepts & Theory

Scikit-Learn Ecosystem

Linear & Logistic Regression

Decision Trees & Random Forest

Classification Metrics

Clustering & Unsupervised Learning

K-Means

Hierarchical Clustering

PCA & Dimensionality Reduction

Neural Network Basics

Perceptron

Activation Functions

Backpropagation

Deep Learning Frameworks

TensorFlow/Keras

PyTorch

JAX

Specialized Architectures

Convolutional Neural Networks

Recurrent Neural Networks

Transformers

Model Deployment

Model Monitoring

Advanced MLOps

Computer Vision

OpenCV

Image Processing

Object Detection

Natural Language Processing

Text Preprocessing

Word Embeddings

Named Entity Recognition

Reinforcement Learning

Q-Learning

Policy Gradient

Actor-Critic

Modern Neural Networks

Attention Mechanisms

Transformers

Self-Attention

Large Language Models

BERT Family

GPT Family

T5/UL2

Advanced Vision Models

Vision Transformer

CLIP

Generative Vision Models

Advanced Optimization

Advanced Training Techniques

Theoretical Foundations

Generative AI

GANs

VAEs

Diffusion Models

Multimodal AI

Advanced RL & Control

Research Methodology

Advanced Implementation

Industry & Impact

Frontier Research

Next-Generation AI

AGI Research

Technical Communication

Team Collaboration

Presentation Skills

Analytical Thinking

Creative Problem Solving

Decision Making

Business Acumen

Domain Expertise

Project Management

Learning Mindset

Adaptability

Teaching & Knowledge Sharing

Networking & Relationships

AI careers are built on connections as much as code. The field moves fast, opportunities spread through networks, and the best learning happens through conversations with other practitioners.

Resources

| Resource | Type | Cost | Link | Notes |
|---|---|---|---|---|
| Kaggle Forums | Community | Free | kaggle.com | Connect through competitions and discussions |
| Never Eat Alone | Book | Paid | Amazon | Classic networking strategies |
| AI Conference Slack/Discord | Communities | Free | Various | Join conference communities that persist year-round |

Building Professional Networks

Start with your current environment. Colleagues, classmates, and online course peers are your first network. They're learning similar things and facing similar challenges.

Attend local meetups and conferences when possible. Face-to-face connections are stronger than online ones. Don't just collect business cards; have genuine conversations about shared interests.

Industry events matter more than you think. Conferences such as NeurIPS, ICML, and ICLR have workshops and social events. Even if you can't attend in person, many have virtual networking components.

Internal Relationships

Your immediate team and company connections often matter most for day-to-day success. Build relationships across functions: data engineers, product managers, and business stakeholders all influence AI project success.

Be helpful before you need help. Share interesting papers, offer to review code, or explain concepts to colleagues. Generosity builds stronger professional relationships than pure networking tactics.

Social Media Presence

AI Twitter is surprisingly active and welcoming. Share your learning journey, comment thoughtfully on others' work, and contribute to discussions. Many job opportunities start with online visibility.

LinkedIn is essential for professional connections. Keep your profile current, share interesting projects, and engage with industry content. Recruiters actively search for AI talent there.

The Long Game

Relationships compound over time. Someone you meet at a meetup today might recommend you for a role in two years. Former colleagues become references, collaborators, and sources of opportunities.

Focus on quality over quantity. A few strong professional relationships beat hundreds of superficial connections. Be genuinely interested in others' work and challenges.